Notes that connect themselves

Drop anything in.

Understanding comes out.

PDFs, links, transcripts, conversations. REM pulls out the people, the topics, and the threads. Every new save strengthens what came before — and starts to tell you what's missing.

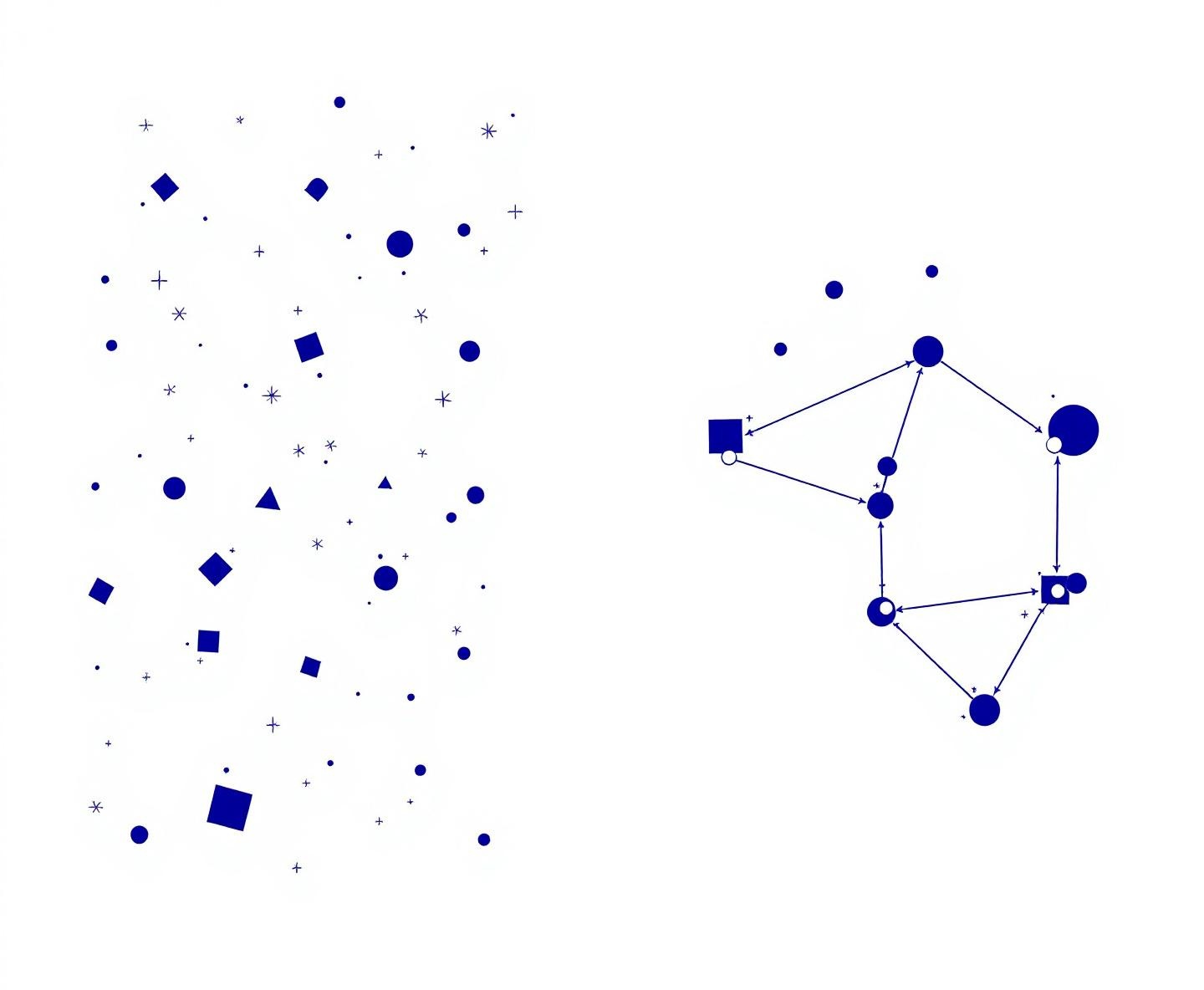

What 40 raw sources become

Most note apps leave you with isolated files. The Second Brain links them — people, ideas, sources — so what you saved last month shows up when it matters this week.

Drop any source. The AI does the rest.

PDFs, URLs, transcripts, images, conversations, code — the ingestion pipeline handles them all. Sources are archived immutably. Knowledge pages are generated automatically.

One source in. Eight to fifteen pages out.

Every source goes through an 8-step pipeline. A single 20-page PDF typically produces 8–15 interconnected knowledge pages with an average of 12 cross-references each.

Pick up where you left off.

A short summary of recent activity (hot.md) loads first, so REM always knows where you left off, what changed, and what matters right now. No crawling through thousands of notes.

    knowledge/
    ├── index.md         # Master catalog
    ├── hot.md           # Recent context ← loaded first
    ├── entities/        # People, orgs, products
    ├── concepts/        # Ideas, patterns, frameworks
    ├── sources/         # One summary per ingested source
    ├── questions/       # Filed answers and research results
    ├── log.md           # Chronological operation history
    └── overview.md      # Executive summary of everything

    sources/             # Immutable source archive
    ├── articles/        # Web articles, blog posts
    ├── transcripts/     # Video/audio transcripts
    └── .manifest.json   # Delta tracking (hash, ingested_at)
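
The `.manifest.json` entry above is what makes re-ingestion incremental: record a content hash and an ingestion time per source, then reprocess only files that are new or whose hash changed. Below is a minimal Python sketch of that idea. It assumes, hypothetically, that the manifest is a JSON object mapping each source's relative path to `{"hash": ..., "ingested_at": ...}` and that the hash is SHA-256. REM's real schema and hash function are not documented here, and the helper names `pending_sources` and `record_ingested` are illustrative only.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path

# Hypothetical manifest layout (an assumption, not REM's documented schema):
# {"articles/post.md": {"hash": "<sha256 hex>", "ingested_at": "<ISO 8601>"}}
MANIFEST = ".manifest.json"


def file_hash(path: Path) -> str:
    """SHA-256 of a file's bytes, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def load_manifest(root: Path) -> dict:
    """Read the manifest, or start empty if none exists yet."""
    manifest_path = root / MANIFEST
    return json.loads(manifest_path.read_text()) if manifest_path.exists() else {}


def pending_sources(root: Path) -> list[str]:
    """Return relative paths of sources that are new or whose content changed."""
    manifest = load_manifest(root)
    pending = []
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.name == MANIFEST:
            continue
        rel = path.relative_to(root).as_posix()
        entry = manifest.get(rel)
        # New file, or same path with different bytes: needs (re)ingestion.
        if entry is None or entry.get("hash") != file_hash(path):
            pending.append(rel)
    return pending


def record_ingested(root: Path, rel: str) -> None:
    """Update the manifest entry for one source after it has been ingested."""
    manifest = load_manifest(root)
    manifest[rel] = {
        "hash": file_hash(root / rel),
        "ingested_at": datetime.now(timezone.utc).isoformat(),
    }
    (root / MANIFEST).write_text(json.dumps(manifest, indent=2, sort_keys=True))
```

Calling `pending_sources(Path("sources"))` before a run returns only the files that still need ingesting. Calling `record_ingested` once per file after it has been processed means an interrupted run simply picks up the remaining files next time.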

How your knowledge organizes itself

Three layers, each with a job. A short summary (hot.md) stays close so REM remembers where you are. An index (index.md) maps everything. Pages hold the depth.

Knowledge is compiled once and queried many times. REM reads exactly what your question needs, nothing more.

Knowledge Graph

Every entity, concept, and source becomes a node. Every cross-reference becomes an edge. The graph renders in real time with a physics-based layout that scales to thousands of nodes.
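
Turning pages into a graph is mostly link parsing. A minimal Python sketch, assuming pages are held as a map of page name to Markdown body and that cross-references use `[[Page Name]]` wikilink syntax. Both are assumptions, since REM's link format is not specified here, and `build_graph` is a hypothetical name. Layout and rendering are out of scope.

```python
import re

# Assumption: cross-references are written as [[Target Page]] wikilinks,
# optionally with an alias ([[Target|alias]]) or section ([[Target#part]]).
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[|#][^\]]*)?\]\]")


def build_graph(pages: dict[str, str]) -> tuple[set[str], set[tuple[str, str]]]:
    """Turn {page_name: markdown_body} into (nodes, undirected edges).

    Every page is a node. Every cross-reference between two existing pages
    becomes one edge, stored as a sorted pair so A->B and B->A collapse.
    """
    nodes = set(pages)
    edges: set[tuple[str, str]] = set()
    for source, body in pages.items():
        for match in WIKILINK.finditer(body):
            target = match.group(1).strip()
            # Skip self-links and links to pages that don't exist.
            if target in nodes and target != source:
                a, b = sorted((source, target))
                edges.add((a, b))
    return nodes, edges
```

Storing each edge as a sorted pair means a link from A to B and a link back from B to A become a single edge, in line with the bidirectional cross-references described on this page. Links to pages that do not exist are left for the lint checks rather than drawn as edges.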

From chaos to clarity

Three messy meeting notes go in. A structured, cross-referenced knowledge base comes out.

8 search modes. One API.

Different questions need different retrieval strategies. The Second Brain picks the right mode automatically, or you can specify one yourself.

Ask anything. Get cited answers.

Every answer cites the specific knowledge pages it drew from. REM never fabricates: if there's a gap, it tells you what's missing and suggests a source to ingest.

Give it a topic. Get a research dossier.

The autoresearch engine runs 3–5 rounds of web research. Broad search first, then gap-filling, then synthesis. Everything gets filed into your knowledge base with citations and cross-references.

Type a topic from any device. REM does the reading. You get cited findings, confidence scores, and a synthesis page, all filed to your brain.

Eight checks keep your brain clean.

The lint engine scans your entire knowledge base for structural issues, contradictions, dead links, and gaps. Run it daily or on demand.
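
Of the eight checks, dead links and orphan pages are the simplest to illustrate. A minimal Python sketch, assuming pages are held in memory as a map of page name to Markdown body and that cross-references use `[[Page Name]]` wikilinks. The link syntax, the function name `lint`, and the `roots` parameter are hypothetical, and the remaining checks (such as contradiction detection) are not sketched.

```python
import re
from collections import defaultdict

# Assumption: cross-references are [[Target Page]] wikilinks, optionally
# with an alias or section suffix. Only two lint checks are sketched here.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[|#][^\]]*)?\]\]")


def lint(pages: dict[str, str], roots: frozenset[str] = frozenset()) -> dict[str, list]:
    """Report dead links and orphan pages.

    dead_links: (page, target) pairs where no page named `target` exists.
    orphans: pages that no other page links to, ignoring `roots`
             (entry points such as a master index need no inbound link).
    """
    inbound: defaultdict[str, set[str]] = defaultdict(set)
    dead_links = []
    for page, body in pages.items():
        for match in WIKILINK.finditer(body):
            target = match.group(1).strip()
            if target not in pages:
                dead_links.append((page, target))
            elif target != page:  # a self-link doesn't rescue an orphan
                inbound[target].add(page)
    orphans = sorted(p for p in pages if p not in roots and not inbound[p])
    return {"dead_links": dead_links, "orphans": orphans}
```

Passing the master index as a root keeps it from being flagged as an orphan, since entry-point pages are not expected to have inbound links.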

The complete second brain toolkit.

Everything you need to build a living knowledge base. Capture, connect, ask, refine.

Not another search box.

A search box re-derives answers from raw text every time. The Second Brain compiles knowledge once into persistent, cross-referenced pages. Knowledge compounds; search doesn't.

| Feature | Search box | Note-taking apps | Second Brain |
|---|---|---|---|
| Knowledge compounds over time | — | — | Yes |
| Auto entity extraction | — | — | Yes |
| Contradiction detection | — | — | Yes |
| Bidirectional cross-references | — | Manual | Automatic |
| Source archive | — | — | Yes |
| Picks up where you left off | — | — | Yes |
| Autonomous research | — | — | 3–5 rounds |
| Knowledge health checks | — | — | 8 categories |
| iOS, web, voice capture | — | — | Yes |

Three steps to a second brain.

Drop sources, ask questions, see what's missing — the same brain across every device.

Anything goes.

Paste a link. Forward a thread. Drop a PDF. REM extracts entities, concepts, and cross-references automatically.

Cited answers.

Quick, standard, or deep. REM answers from your real notes, with sources, not vibes.

Health check.

REM flags orphans, dead links, and contradictions. Every source strengthens every other source.

A search box gets you results. REM builds a brain.

Stop storing documents. Start building understanding.

A second brain that understands what you save, connects it to everything else, and tells you what's missing. The longer you use it, the sharper it gets.